Evolution of AI Bot Blocking on News Websites

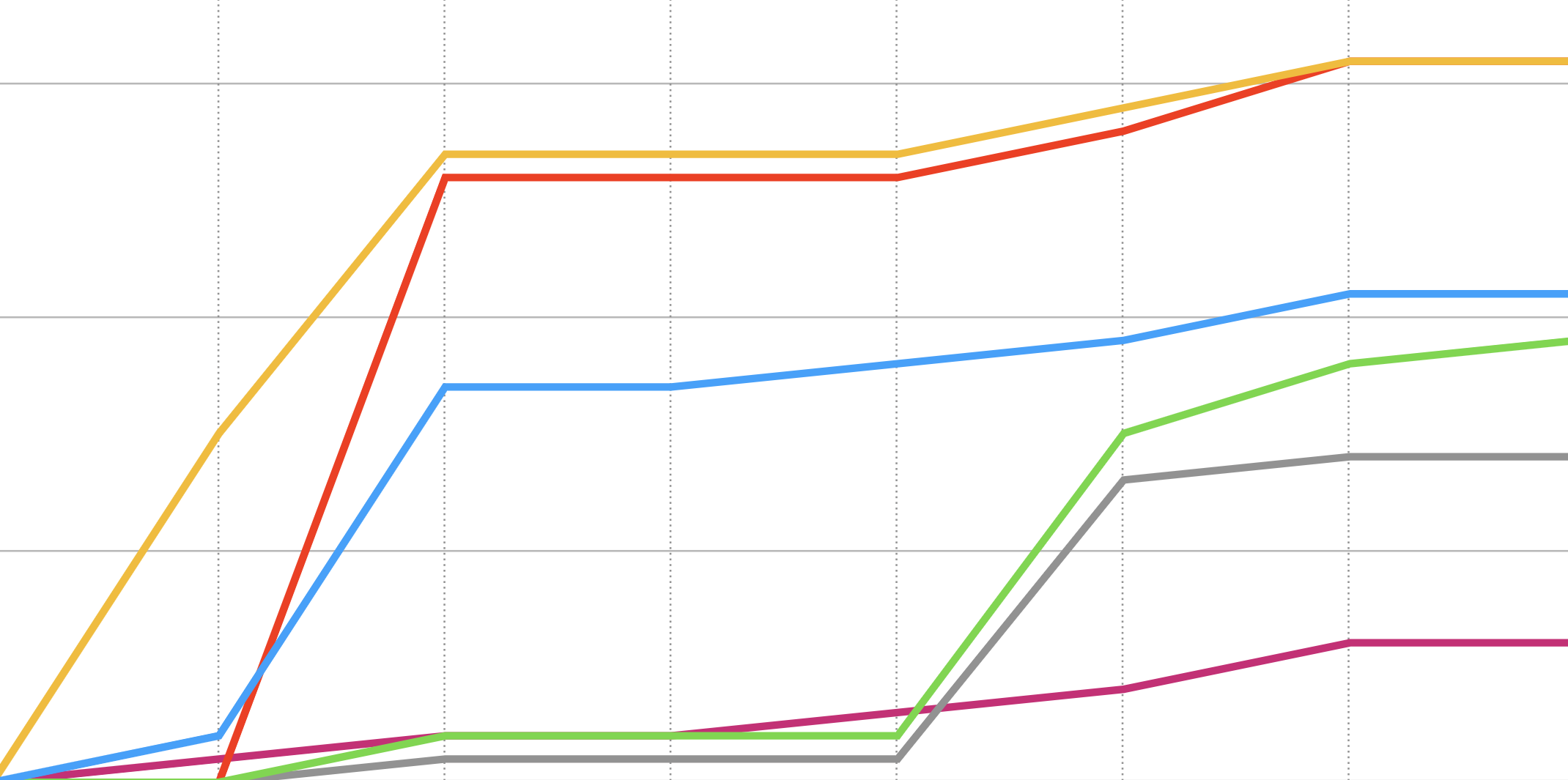

Training Large Language Models and deploying them in AI chatbots require access to vast amounts of data, often scraped from online sources, including news websites. This data is crucial for the bots to understand and generate human-like text. However, the practice of scraping has raised concerns among news publishers. AI systems like ChatGPT can be seen as potential competitors in the news business: when chatbots are asked about current events, they can generate answers that rely on data from news websites. While scraping fuels the advancement of AI, it also poses potential issues for publishers, such as copyright infringement and loss of ad revenue. ...