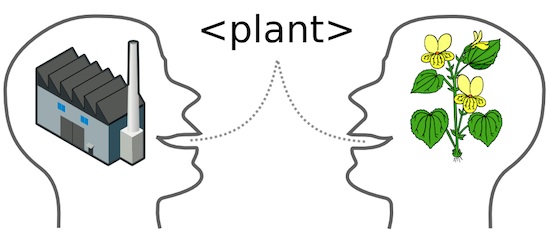

A lightweight semantic interoperability framework for countries and large organizations (and small ones)

This post summarizes some ideas for a lightweight semantic interoperability framework. It is mainly a composition of existing open standards, combined so that organizations can keep semantic and technical descriptions connected over time. The idea is to let semantic interoperability emerge and increase over time without a large centralized organization defining vocabularies.

Main points:

- The benefits appear when a vocabulary is used.
- An important factor is how a vocabulary and its parts can be published, discovered and referenced.
- There needs to be one and only one vocabulary artifact.
- The vocabulary artifact must be machine processable to allow aggregation and automatic generation of other artifacts.

Background

The need for interoperability arises in scenarios with many contributing actors. In the old days this was solved by hiring a (large) consulting organization that (hopefully) had experience of gluing together many products from its vast product portfolio. Everyone involved was happy if it worked. Being able to swap out a part of this system, or connect it to a third party that had a different technical platform, was considered a risk. ...